No One Makes the Charge of Arbitrariness Against Utilitarians

If one intuition works, why not two? Or three? Or a hundred?

I have been a moral realist for as long as I can remember. I think the reason is roughly this: it seems to me that certain things, such as pain and suffering to take the clearest example, are bad. I don't think I'm just making that up, and I don't think that is just an arbitrary personal preference of mine. If I put my finger in a flame, I have a certain experience, and I can directly see something about it (about the experience) that is bad. Furthermore, if it is bad when I experience pain, it seems that it must also be bad when someone else experiences pain. Therefore, I should not inflict such pain on others, any more than they should inflict it on me. So there is at least one example of a rational moral principle.

But I was not always an intuitionist: when I first learned of the theory, in the form espoused by W. D. Ross, I thought that it rendered ethics unacceptably arbitrary. Ross had given a list of several different 'prima facie duties' that we have, such as the duty to keep promises, the duty to avoid causing harm, the duty to show gratitude for benefits given to us, and so on. Ross had no account of why these things were all duties, other than that it just seemed so to most people; nor had he any account of how to resolve conflicts between prima facie duties in particular cases, other than to just use one's best judgment. It seemed to me that this rendered most moral judgments doubly arbitrary: there was arbitrariness both in the general principles of duty themselves, and in their application to particular circumstances.

I am not sure, now, why I thought these things. If someone had asked me, 'Do you think that every belief requires an infinite series of arguments in order to be justified?' I would surely have said no. So what was 'arbitrary' about Ross' ethical views could not have been the mere fact that he advanced some claims without argument. I think I would have been satisfied, or at least less inclined to make the 'arbitrariness' objection, if Ross had stated a single ethical principle from which all other ethical principles could be logically derived—as, for example, the utilitarians do. No one makes the charge of arbitrariness against utilitarians. But this makes little sense-if a single foundational ethical principle may be non-arbitrary, why not two? Or six? Or a hundred?

—Michael Huemer, Ethical Intuitionism pp. 250 - 251 (emphasis mine)

I’ve criticized utilitarianism in quite a few newsletters, comments, and forum posts. If you’ve been following along, you might think it’s my favorite thing to do!

As an ethical intuitionist, I believe that you can learn about good and bad just by reflecting on your own intuitions. In other words, if something seems wrong, then that’s at least some reason to think it’s wrong. I’m not claiming that intuitions are perfect, that a single intuition is definitive proof, or that intuitions can’t be distorted by personal or cultural bias. I concede all of that! Intuitions aren’t perfect.

But intuitions have to be worth at least something. You can’t deny that intuition gives you evidence toward a conclusion without accepting some preposterous and self-refuting form of skepticism. If I say that all knowledge about the world is inferred from other knowledge about the world and intuitions are worthless as evidence, then I’m going to need to accept that it’s actually okay to have an infinite regress where proposition P1 is justified by proposition P2, which is justified by P3 and so on toward infinity. Or I’m going to need to accept that it’s okay to have circular reasoning where proposition P1 is justified by P2 and P2 is justified by a bunch of other propositions until we reach PN which is justified by P1 itself. I think both of these alternatives seem preposterous on their face, but they do have their intelligent defenders.

However, if you’re a utilitarian, you probably already think that we can have some foundational or self-evident moral knowledge. In particular, we know that “suffering is bad” and that “happiness is good.” We also know that some amount of suffering can be traded off against some amount of happiness. The result is that utilitarians have a criterion for what we should do that incorporates both: the morally correct action is that which maximizes utility.1 Utilitarians try to map both suffering and happiness to numerical values so that choices can be compared and ranked. The highest-utility action is the ethical action.

One issue I have with “self-evident” talk is that it sounds like intuition to me. My suspicions don’t seem that farfetched. The utilitarian that I am critiquing the most, Scott Alexander, believes that the meta-ethical justification for utilitarianism is actually from our intuitions. From his Consequentialist FAQ (emphasis mine):

1.2: Why care about moral intuitions?

Moral intuitions are people's basic ideas about morality. Some of them are hard-coded into the design of the human brain. Others are learned at a young age. They manifest as beliefs (“Hurting another person is wrong"), emotions (such as feeling sad whenever I see an innocent person get hurt) and actions (such as trying to avoid hurting another person.)

Moral intuitions are important because unless you are a very specific type of philosopher they are the only reason you believe morality exists at all. They are also the standards by which you judge all moral philosophies; if the only content of a certain moral philosophy was “it's wrong to wear green clothes on Saturday”, then you would not find this moral philosophy attractive unless it could justify itself by saying why wearing green clothes on Saturday affected other things that our moral intuitions find more important. For example, if every time someone wore green clothes on Saturday, the world became a safer and happier place, then the suggestion to wear green clothes on Saturday might seem justified - but in this case the work is being done by a moral intuition in favor of a safer and happier world, not by anything about green clothes themselves. On the other hand, if a philosopher were to justify a moral theory that we should make the world a safer and happier place by appealing to the fact that it might make people wear more green clothes on Saturday, this would be ridiculous. So moral theories must end up grounded in our moral intuitions for them to work.

Utilitarians accept intuition in one context, to reach utilitarianism, but reject it in others: when it is used to establish prima facie duties, to argue for weak natural rights, or to argue against a utilitarian conclusion. If an intuition can count as evidence for your desired hypothesis in one context, you cannot outright reject intuition as evidence against your hypothesis in another context. Intuitions must be able to serve as evidence both for and against your hypothesis.

Utilitarians treat intuitions like Wittgenstein’s ladder. They use them to reach their position but then cast them aside as flawed in later contexts:

My propositions serve as elucidations in the following way: anyone who understands me eventually recognizes them as nonsensical, when he has used them—as steps—to climb beyond them. (He must, so to speak, throw away the ladder after he has climbed up it.) He must transcend these propositions, and then he will see the world aright.2

There is an appeal among utilitarians, as well as libertarians, to the idea of an aesthetically pleasing, simple, one-sentence ethical theory that summarizes all of one’s beliefs. Perhaps this seems less arbitrary in some way. Having a single principle escapes self-contradiction, but such a stringent principle has to hold up even at the extremes, and in certain situations it doesn’t: its verdicts fail to align with our intuitions. The utilitarian faces a dilemma when a scenario strains the credibility of their hypothesis. They can bite the bullet and say that the intuition is wrong and that the extreme conclusion really is entailed by utilitarianism, or they can modify utilitarianism to avoid the conclusion.

They can also deny that utilitarianism entails the counterintuitive conclusion at all. This is the most difficult response to deal with because it usually involves objections about technicalities, secondary and tertiary effects, or the establishment of bad norms that have a cascade effect. I respect these as legitimate objections, but they miss the point of the hypothetical. They reduce the problem to one of ambiguity and uncertainty. I can just keep modifying the thought experiment until it works.

It feels similar to how a student in a philosophy class might object that they would stop the trolley in the trolley problem by knocking it off the track, or untie the people before it hit them, or try to figure out what the people are like. Are they evil people? Are there five murderers there? And you want to just say, “You’re missing the point.” You’re supposed to sense an intuition, but I think I need to figure out how to be a better communicator to get this point across. It’s not about the technical details; it’s about the intuition underlying them.

I think these intuitions are evidence, and treating them as evidence is what I’m trying to get across. But some people don’t think we can have foundational beliefs like this that just are. Saying “yeah, this is just true” and getting asked “how do you know?” and saying “it just seems true” actually seems really unscientific and unconvincing, but it is all we have. We must ground reality and ethical reality in our intuitive sense of what is right and what is wrong.

Utilitarianism is a hypothesis and intuition is evidence. It can’t be the case that all intuitions are correct, just as a theory can’t explain everything. Counterevidence exists. If someone tells you that Jesus Christ appeared to them in a dream and told them to go to church, this counts as evidence for the existence of a Christian God. Perhaps it is extremely weak evidence, but it is still evidence. Granted, an atheist would believe it was merely a dream, but it is still evidence.

We can’t expect any theory to accommodate literally all the evidence. Some evidence will probably count toward other hypotheses, but utilitarianism seeks to explain all moral facts with a one-sentence theory. If I present enough counterevidence, in the form of counterintuitive conclusions that follow from utilitarianism, you may have to update your priors. That is, if you think intuitions are evidence.

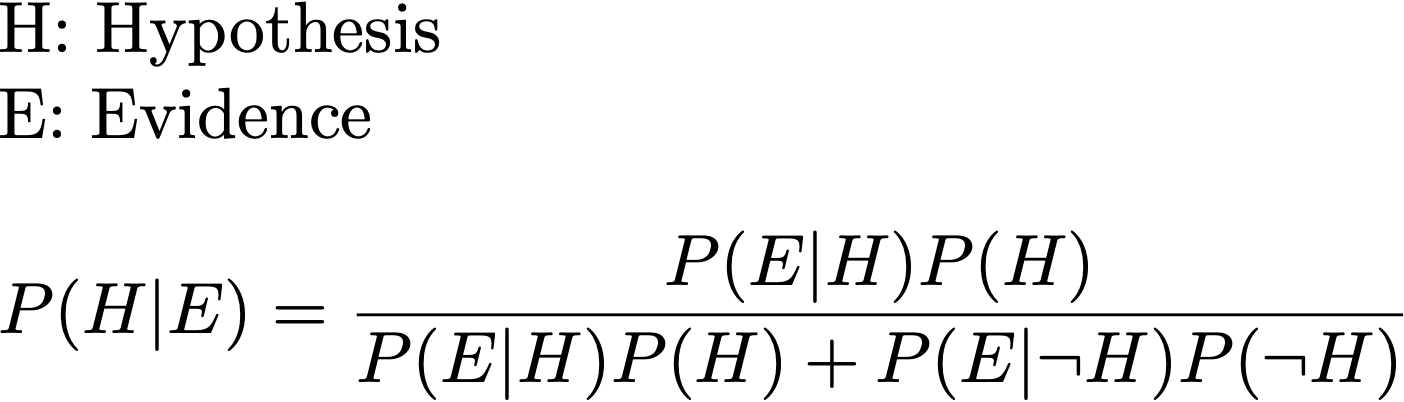

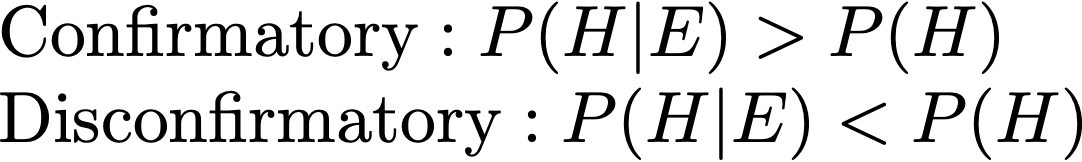

Rationalists should be familiar with Bayes’ rule:
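A standard statement of the rule, writing H for a hypothesis (here, an ethical theory such as utilitarianism) and E for a piece of evidence (here, an intuition), is given below. The letters are labels assumed for illustration, not notation taken from elsewhere in this post.

```latex
P(H \mid E) = \frac{P(E \mid H)\, P(H)}{P(E)}
```

Here P(H) is your confidence in the hypothesis before considering the evidence, and P(H | E) is your confidence after considering it.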

If the evidence makes our hypothesis more likely, then it can be considered confirmatory evidence. If evidence makes our hypothesis less likely, it is regarded as disconfirmatory evidence.

If we accept that a class of evidence is acceptable to confirm our hypothesis in one context, we cannot reject that class of evidence as unacceptable in another context. If I can present to you counterintuitive implications of utilitarian ethics, then you should lower your confidence that utilitarianism is the correct ethical theory and raise your confidence that it is not. I think it is easy to produce intuitions that go against utilitarianism. You can’t reject intuitive reasoning a priori if you use it to get to the utilitarian foundation.

If you accept something like “Suffering is bad” and “Happiness is good” on the grounds that they are self-evident, then you have to accept that other things may be self-evident too. If some other self-evident claim goes against your hypothesis, then you’ve got to lower the probability of your hypothesis rather than just saying, “Look, this is a good reason why our intuitions aren’t reasonable.”

Problem cases in which utilitarianism yields counterintuitive conclusions include the repugnant conclusion, excessive obligations, the distinction between omission and commission, the non-fungibility of persons, utility monsters, Pascal’s mugging, experience machines, and parental obligations. To lay all of these out in one post is a bit too much. I do have some arguments elsewhere about some of these ideas.
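To make the updating concrete, here is a minimal sketch of Bayes’ rule in odds form. Everything in it is an assumption for illustration: the function name `update`, the starting credence of 0.90, and the likelihood ratio of 0.5 (meaning each counterintuitive implication is taken to be twice as likely to turn up if utilitarianism is false as if it is true). None of these numbers come from any source; the point is only that individually weak counterevidence still moves the needle, and that it accumulates.

```python
def update(prior: float, likelihood_ratio: float) -> float:
    """Return P(H | E) given P(H) and the ratio P(E | H) / P(E | not H).

    This is Bayes' rule in odds form:
    posterior odds = prior odds * likelihood ratio.
    """
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


# Hypothetical starting confidence that utilitarianism is correct.
credence = 0.90

# Hypothetical strength of each counterintuitive case: a ratio below 1
# means the evidence is disconfirmatory.
ASSUMED_RATIO = 0.5

for case in ["repugnant conclusion", "utility monster", "experience machine"]:
    credence = update(credence, ASSUMED_RATIO)
    print(f"after {case}: {credence:.3f}")
```

With these made-up inputs the credence falls at every step. A ratio above 1 would raise it instead, which is the symmetric treatment of intuitions the argument asks for.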

Some utilitarians believe that the morally correct action is that which maximizes total utility (total utilitarians) and some believe that the morally correct action is that which maximizes average utility (average utilitarians). Some have other more idiosyncratic beliefs.

Wittgenstein, L. (1998). Tractatus Logico-Philosophicus. §6.54.

image source: https://www.creativeboom.com/inspiration/hauntingly-beautiful-paintings-by-aron-wiesenfeld/

I have been accusing utilitarians of arbitrariness for over a decade, although it's only since I had ready access to DALL-E that I was able to make a blog post about it filled with pretty pictures: https://thingstoread.substack.com/p/atheism-or-utilitarianism

I do realize that in philosophy, intuition is often regarded as meaningful. How successful has philosophy been compared to other disciplines? In science, intuitions lead to hypotheses, which are tested with evidence. Intuitions are extremely useful, but they are most definitely not good evidence, because they are heavily biased. Intuitive thinking leads to Geocentrism, sympathetic magic, moralizing gods, and arguments like this:

> Saying "yeah, this is just true” and getting asked “how do you know?” and saying “it just seems true” actually seems really unscientific and unconvincing, but it is all we have. We must ground reality and ethical reality in our intuitive sense of what is right and what is wrong.

No, enough! Whereof one cannot speak, thereof one must be silent.