There is an amusing game that children play with an adult where they keep asking the same question over and over again until the adult can’t answer, resorting to “because I told you so” or “because that’s the way it is.”

A child could string together a series of such questions: why we drive on the right side of the road, why we do what everyone else does, why we don’t want to hit another car, why we don’t want to harm others, why harming others is bad, and why we should avoid bad things. By then we have landed in metaethics, where the questions aren’t so easy anymore. At this point the parent just says “because I said so,” perhaps after taking a moment to acknowledge the profundity.

If we play that game with the question “How do you know?”, we’re going to have another problem. How do you know that the United States is not landlocked? How do you know there are oceans on both sides? How do you know you saw the ocean that time you went to California? How do you know the maps you’ve seen are correct? How do you know you have a good memory? …You can see how it starts to get hard.

“How do you know?” really means “What makes your belief justified?” Usually, people need some evidence and a reason to think that the evidence actually supports the belief. We can formalize this with the help of the Stanford Encyclopedia of Philosophy:

Principle of Inferential Justification (PIJ):

To be justified in believing P on the basis of E one must be (1) justified in believing E, and (2) justified in believing that E makes probable P.¹

If you want to be justified in believing P, you also need to be justified in believing E. If all justification is inferential, then believing E requires some further evidence E2. That E2 needs an E3, and that E3 needs an E4. Likewise, the belief that E makes P probable needs its own justification, and that justification needs justification, and so on and so on.

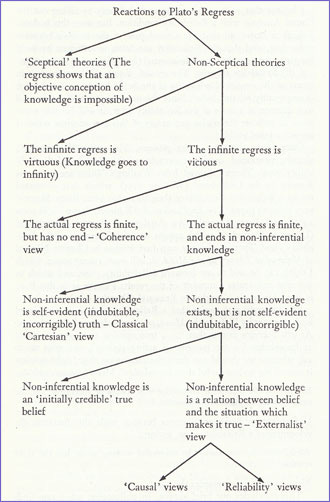

We can’t live like this. What is the solution? We can be skeptics and just say “Well, we can’t really know anything”, call the job finished and head home, but how can we be confident we won’t collide with traffic if we drive on the correct side of the road? It seems like we do actually know at least some things and have good reasons for believing them.

If I think that no beliefs are justified, how do I know that I’m justified in believing “We can’t really know anything”? This skepticism refutes itself: to believe in skepticism for good reason is to claim a justified true belief, which is exactly what the skeptic rejects.

We could say that there is justified true belief, but that it rests on circular reasoning, and that’s okay. But if we want to justify P by running a long string of propositions that leads back to P, then when was P justified? Was it already justified when we first set out to justify it? And if so, why do we need that long string back to P at all?

I could say that an infinite regress is actually okay, but why would I trust one chain over another? And what do steps 50, 60 and 70 of this infinite regress look like? If I start from “The things my eyes see are real,” how do I go much further down the chain?

Ultimately, I step off the path at the claim that we can have non-inferential knowledge. I think that something can seem true and, absent evidence to the contrary, that is a decent reason for thinking it’s true. As with many other things, Michael Huemer persuaded me of his position on this matter, called Phenomenal Conservatism:

The following is a recent formulation of the central thesis of phenomenal conservatism:

PC: If it seems to S that P, then, in the absence of defeaters, S thereby has at least some justification for believing that P.

The phrase “it seems to S that P” is commonly understood in a broad sense that includes perceptual, intellectual, memory, and introspective appearances. For instance, as I look at the squirrel sitting outside the window now, it seems to me that there is a squirrel there; this is an example of a perceptual appearance (more specifically, a visual appearance). When I think about the proposition that no completely blue object is simultaneously red, it seems to me that this proposition is true; this is an intellectual appearance (more specifically, an intuition). When I think about my most recent meal, I seem to remember eating a tomatillo cake; this is a mnemonic (memory) appearance. And when I think about my current mental state, it seems to me that I am slightly thirsty; this is an introspective appearance.

People accept intuitions, memories, visual appearances and other non-inferential knowledge all the time. We could not function without doing this, yet when people start reasoning about philosophical principles, they often reject non-inferential knowledge outright. They think like a scientist, correctly recognizing that personal experience and appearances are not as good as studies and data for reaching conclusions about complex questions. But sometimes you have to think like a philosopher: ultimately, you have to use your eyes to see things and your intuition to recognize logical truths.

A critique of this principle: https://doi.org/10.5840/jpr_2002_4

To be honest, most discussions of epistemology just hurt my head. As an American, I've probably imbibed more Scottish Realism than anything else ( https://en.wikipedia.org/wiki/Scottish_common_sense_realism ), but I haven't examined my own beliefs too deeply. As you say, it's impossible to live in the world if we always have to justify every one of our beliefs. But maybe there is a trust angle? As a Christian, I have to trust that God is able to communicate with me in a way I can understand; otherwise the whole Christian religion is an absurd lie.